GPT-5.4 Mini and Nano: The New Kings of Small Models

GPT-5.4 Mini scores 60% on Terminal-Bench 2.0 at just $0.75/1M input. GPT-5.4 Nano costs $0.20/1M. Both are available now on Smart AIPI at 75% off — here's how to use them for agents, coding, and computer use.

TL;DR: GPT-5.4 Mini and Nano are live on Smart AIPI. Mini scores 60% on Terminal-Bench 2.0 at $0.75/1M input. Nano costs just $0.20/1M. Both are available at 75% off. Use gpt-5.4-mini and gpt-5.4-nano in any API call.

OpenAI just dropped two new models in the 5.4 family, and they change the economics of AI development. GPT-5.4 Mini brings near-frontier coding ability at a fraction of the price. GPT-5.4 Nano is the cheapest frontier model ever released.

Both are live on Smart AIPI right now.

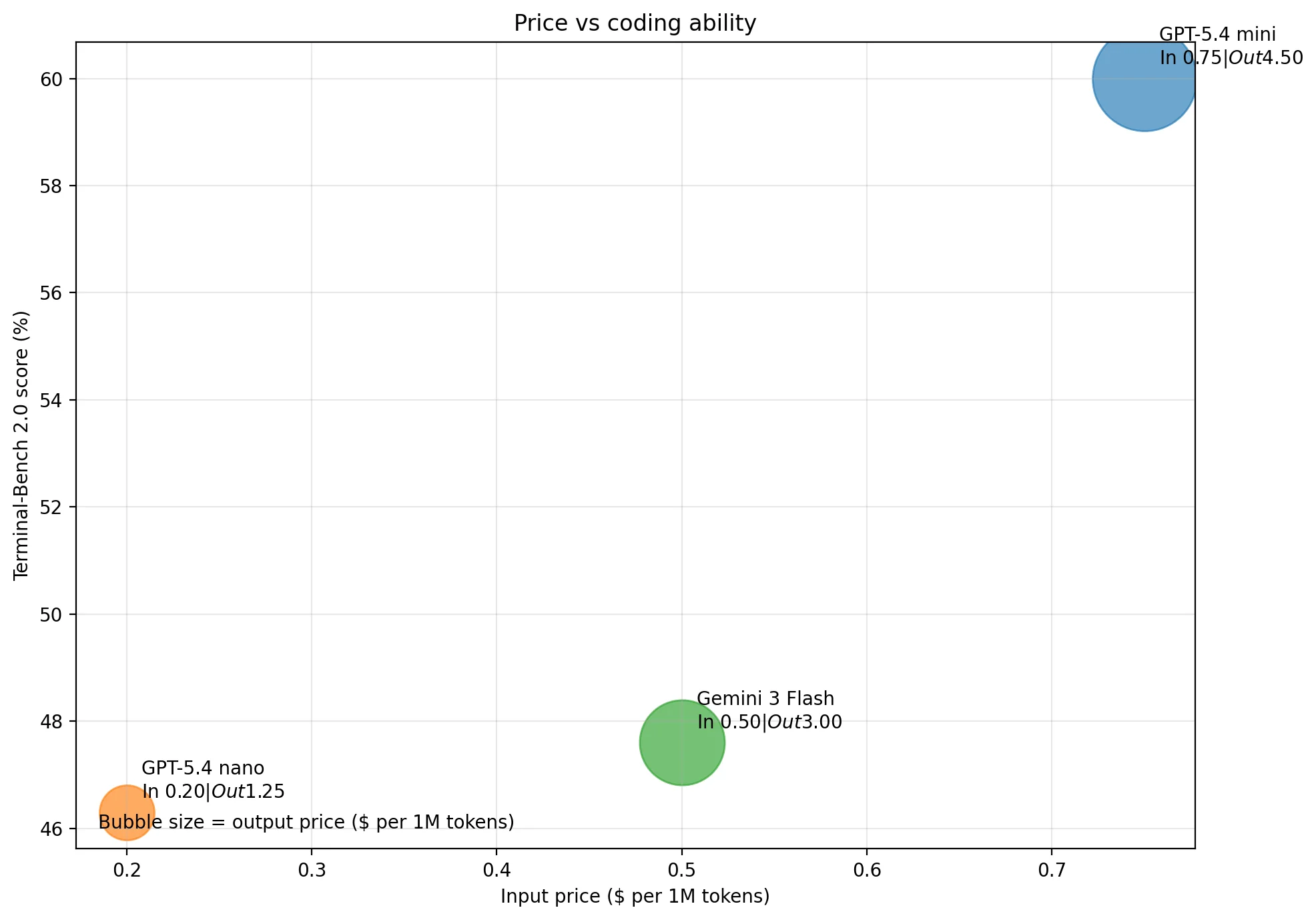

The Numbers

Here's how the new models compare on price and performance:

| Model | Terminal-Bench 2.0 | Input /1M | Output /1M | Smart AIPI Output /1M |
|---|---|---|---|---|
| GPT-5.4 Mini | 60.0% | $0.75 | $4.50 | $1.125 |
| GPT-5.4 Nano | 46.2% | $0.20 | $1.25 | $0.3125 |
| Gemini 3 Flash | 47.7% | $0.50 | $3.00 | N/A |
| GPT-5.4 (full) | 72.1% | $2.50 | $15.00 | $3.75 |

GPT-5.4 Mini beats Gemini 3 Flash on Terminal-Bench 2.0 by 12.3 points (60.0% vs. 47.7%) while costing 50% more on input at list price ($0.75 vs. $0.50). On Smart AIPI the price comparison flips: you're paying $0.1875/1M input instead of Gemini's $0.50.
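If you want to check the discount math yourself, here is a minimal sketch. The prices are copied from the table above; the `discounted` helper and the constant names are ours for illustration, not part of any SDK.

```python
# List prices per 1M tokens (USD), copied from the comparison table.
LIST_PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
    "gpt-5.4": {"input": 2.50, "output": 15.00},
}
GEMINI_3_FLASH_INPUT = 0.50
DISCOUNT = 0.75  # Smart AIPI's advertised 75% off


def discounted(price: float, discount: float = DISCOUNT) -> float:
    """Return the price after applying a fractional discount."""
    return price * (1 - discount)


mini_input = discounted(LIST_PRICES["gpt-5.4-mini"]["input"])
print(f"Mini input on Smart AIPI: ${mini_input:.4f}/1M")
print(f"Share of Gemini 3 Flash input price: {mini_input / GEMINI_3_FLASH_INPUT:.1%}")
```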

When to Use Which Model

The 5.4 family now gives you three tiers to match your workload:

GPT-5.4 Mini: The Subagent Workhorse

This is the model you want for parallelized coding tasks. If you're running Codex, Claude Code, or any agentic coding tool that spawns subagents, Mini is the sweet spot.

The subagent cost play:

- Run your main agent on gpt-5.4 with high reasoning for architecture decisions

- Spawn subagents on gpt-5.4-mini with high reasoning for implementation tasks

- Each subagent costs ~70% less than running on the full model, while still scoring 60% on Terminal-Bench

- 10 parallel subagents on Mini cost about the same as 3 on GPT-5.4 at list prices (10 × 0.3 = 3), and finish faster
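To put rough numbers on the subagent play, here is a back-of-the-envelope sketch. The per-agent token counts are a made-up workload, and `run_cost` is a hypothetical helper; only the list prices come from the table earlier in this post.

```python
# Per-1M-token list prices (USD) from the comparison table.
PRICES = {
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
}


def run_cost(model: str, agents: int, input_tokens: int, output_tokens: int) -> float:
    """Total USD cost for `agents` runs that each use the given token counts."""
    p = PRICES[model]
    per_agent = (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000
    return agents * per_agent


# Hypothetical workload: 200k input and 20k output tokens per agent.
mini_fleet = run_cost("gpt-5.4-mini", agents=10, input_tokens=200_000, output_tokens=20_000)
full_fleet = run_cost("gpt-5.4", agents=3, input_tokens=200_000, output_tokens=20_000)
print(f"10 Mini subagents: ${mini_fleet:.2f}")
print(f"3 GPT-5.4 agents:  ${full_fleet:.2f}")
```

Because Mini is priced at 30% of GPT-5.4 on both input and output, the ratio holds for any token mix, as long as each agent uses the same number of tokens on either model.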

Mini also excels at computer use and browser automation. It delivers solid accuracy on UI interaction tasks at much faster speeds than GPT-5.4, making it the right choice for web scraping agents, automated testing, and any workflow where you need the model to navigate screens and click buttons without burning through your budget.

Codex CLI tip: Set model = "gpt-5.4-mini" in ~/.codex/config.toml with model_reasoning_effort = "high" for a fast, capable coding assistant that won't drain your credits.

GPT-5.4 Nano: The Everyday Workhorse

At $0.20/1M input (or $0.05 on Smart AIPI), Nano is practically free. It's not a coding model — but it doesn't need to be. Use it for everything else:

- Classification and routing — decide which model or tool to invoke

- Summarization — condense documents, conversations, search results

- Data extraction — pull structured fields from unstructured text

- Basic agentic tasks — simple multi-step workflows that don't involve code generation

- Conversation titles — we use it ourselves for generating chat titles

- Content moderation — fast, cheap content filtering at scale

Nano handles tasks that previously required GPT-4.1 Mini or GPT-5 Mini but at a fraction of the cost. For high-throughput pipelines processing millions of requests, the savings are massive.

Note: GPT-5.4 Nano currently only supports the Chat Completions endpoint (/v1/chat/completions). The Responses API is not yet available for this model. If you're using tools like Codex CLI that require the Responses endpoint, use Mini instead.
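Here is a minimal sketch of a Nano classification call that goes straight to the Chat Completions endpoint using only the Python standard library. The label set, the `SMARTAIPI_API_KEY` environment variable name, and both function names are our assumptions for illustration; the request body follows the standard Chat Completions shape.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.smartaipi.com/v1"  # base URL given in this post
LABELS = ("billing", "bug_report", "feature_request", "other")  # hypothetical labels


def build_classification_request(text: str, api_key: str) -> urllib.request.Request:
    """Build a Chat Completions request asking Nano to pick one label."""
    payload = {
        "model": "gpt-5.4-nano",
        "messages": [
            {
                "role": "system",
                "content": "Classify the user message. Reply with exactly one of: "
                + ", ".join(LABELS),
            },
            {"role": "user", "content": text},
        ],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def classify(text: str) -> str:
    """Send the request and return the model's label (performs a network call)."""
    # SMARTAIPI_API_KEY is an assumed environment variable name.
    request = build_classification_request(text, os.environ["SMARTAIPI_API_KEY"])
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    label = body["choices"][0]["message"]["content"].strip()
    # Fall back to "other" if the model replies with anything off-list.
    return label if label in LABELS else "other"
```

Building the request separately from sending it keeps the payload easy to inspect and unit-test without spending any credits.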

The Smart Model Stack

Here's the pattern we recommend for production AI applications:

| Task | Model | Why |
|---|---|---|
| Architecture, complex reasoning | gpt-5.4 | Best-in-class quality for critical decisions |
| Coding subagents, code review, computer use | gpt-5.4-mini | 60% Terminal-Bench at 70% less cost than 5.4 |
| Routing, classification, summaries, extraction | gpt-5.4-nano | Near-free at $0.05/1M input on Smart AIPI |
| Image generation and editing | gpt-image-1.5 | Frontier image quality |
| Video generation | sora-2 | Up to 20s video from text or image |
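In application code, the stack above can be as simple as a lookup table. This is a sketch, not a prescribed design: the task-type keys, the `pick_model` name, and the choice of Mini as the fallback are all our assumptions.

```python
# Task type -> model ID, mirroring the table above. The keys are hypothetical
# labels; use whatever task taxonomy your application already has.
MODEL_FOR_TASK = {
    "architecture": "gpt-5.4",
    "complex_reasoning": "gpt-5.4",
    "coding_subagent": "gpt-5.4-mini",
    "code_review": "gpt-5.4-mini",
    "computer_use": "gpt-5.4-mini",
    "routing": "gpt-5.4-nano",
    "classification": "gpt-5.4-nano",
    "summarization": "gpt-5.4-nano",
    "extraction": "gpt-5.4-nano",
    "image": "gpt-image-1.5",
    "video": "sora-2",
}


def pick_model(task_type: str, default: str = "gpt-5.4-mini") -> str:
    """Return the model ID for a task type, falling back to `default` if unknown."""
    return MODEL_FOR_TASK.get(task_type, default)


print(pick_model("code_review"))
print(pick_model("summarization"))
```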

Quick Start

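Both models work with any OpenAI SDK. Point the client at the Smart AIPI base URL (https://api.smartaipi.com/v1) and set the model ID to gpt-5.4-mini or gpt-5.4-nano.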
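With the official OpenAI Python SDK, a minimal call might look like the sketch below. The `ask` helper and the `SMARTAIPI_API_KEY` environment variable name are our assumptions; the base URL and model IDs are the ones given in this post. It uses Chat Completions so the same code works for both Mini and Nano.

```python
import os

BASE_URL = "https://api.smartaipi.com/v1"


def ask(prompt: str, model: str = "gpt-5.4-mini") -> str:
    """Send one prompt through the Chat Completions endpoint and return the reply."""
    # Imported here so this module still loads where the SDK is not installed.
    from openai import OpenAI  # pip install openai

    client = OpenAI(
        base_url=BASE_URL,
        api_key=os.environ["SMARTAIPI_API_KEY"],  # assumed variable name
    )
    response = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
    )
    return response.choices[0].message.content


# Example usage (each line performs a real, billed API call):
#   print(ask("Write a Python function that reverses a string."))
#   print(ask("Suggest a short title for a chat about invoices.", model="gpt-5.4-nano"))
```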

Pricing on Smart AIPI

As always, 75% off OpenAI direct pricing:

| Model | Input /1M | Cached Input /1M | Output /1M |
|---|---|---|---|
| GPT-5.4 Mini | $0.1875 | $0.01875 | $1.125 |
| GPT-5.4 Nano | $0.05 | $0.005 | $0.3125 |

With prompt caching (automatic, no setup needed), long-running agents with consistent system prompts see 30-50% cache hit rates — making Mini even cheaper for agentic workloads.
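To see what those hit rates do to your bill, here is a small sketch that blends cached and uncached input prices. It assumes "cache hit rate" means the fraction of input tokens billed at the cached price, which is our simplification; `blended_input_price` is a hypothetical helper.

```python
def blended_input_price(uncached: float, cached: float, hit_rate: float) -> float:
    """Average input price per 1M tokens when `hit_rate` of input tokens hit the cache."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * cached + (1.0 - hit_rate) * uncached


# Smart AIPI prices for GPT-5.4 Mini from the table above (USD per 1M input tokens).
MINI_UNCACHED, MINI_CACHED = 0.1875, 0.01875

for rate in (0.30, 0.50):
    price = blended_input_price(MINI_UNCACHED, MINI_CACHED, rate)
    saving = 1 - price / MINI_UNCACHED
    print(f"{rate:.0%} cache hits: ${price:.4f}/1M input ({saving:.0%} cheaper)")
```

Under that assumption, Mini's effective input price lands at roughly $0.137/1M with 30% hits and $0.103/1M with 50% hits, or about 27% and 45% below the uncached rate.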

Get Started

Both models are available right now. No waitlist, no special access needed.

- Sign up for a free Smart AIPI account (includes $5 free credits)

- Set your base URL to https://api.smartaipi.com/v1

- Use model gpt-5.4-mini or gpt-5.4-nano

- Or try them instantly at chat.smartaipi.com

The era of expensive AI is over. Build more, spend less.
